Overview of Data Flow Task Plus Configuration

If this is your first time using Data Flow Task Plus to create a dynamic package, please be sure to watch the demonstration videos which illustrate how to configure a package using Data Flow Task Plus. No matter what sources and destinations you’re working with, you can use the following checklist to set up a dynamic package. The steps are intended to be generic and not specific to a particular source or destination.

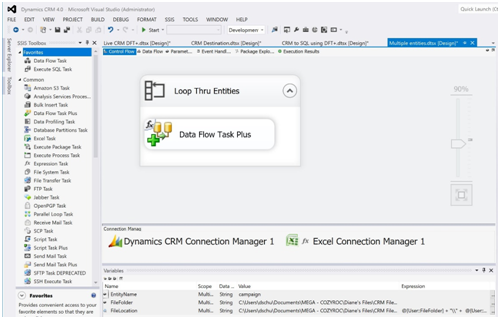

- Use Data Flow Task Plus in place of the standard Data Flow Task.

- It can be found in the SSIS Toolbox next to the Control Flow canvas.

The Data Flow Task Plus editor has a "Dynamic" tab where you can enable dynamic capabilities for the components you want to participate in the dynamic data flow. You would need to set the "Enabled" flag for each of them, and you can configure other component-specific dynamic properties, as well (see the last step for an example).

For each dynamic source and destination component in the data flow, in order to prevent inadvertent metadata refresh possibly leading to losing edits, you will need to disable the validation of external metadata early on:

- Open the Advanced Editor (right-click the component and select “Show Advanced Editor”) and under the "Properties" tab, set “ValidateExternalMetadata” to “False”.

- Tip: Any component that is set to "Enabled" in the "Dynamic" tab of the Data Flow Task Plus editor automatically gets its “ValidateExternalMetadata” set to “False”.

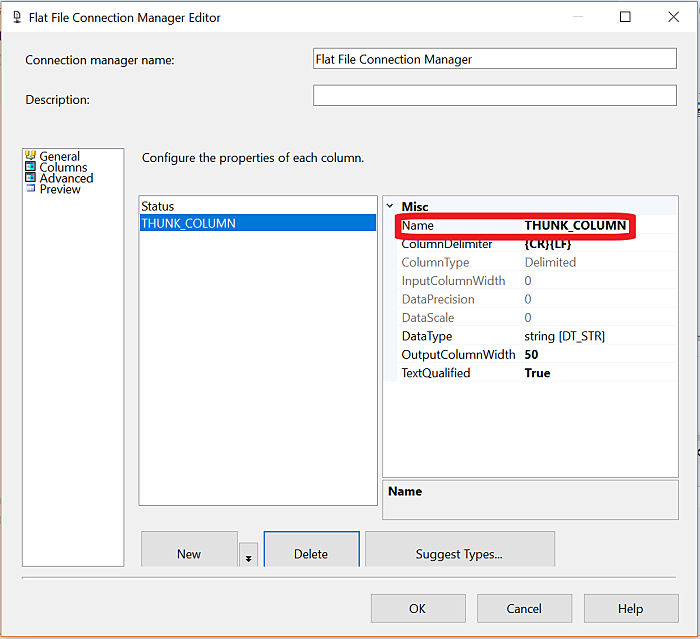

- For some dynamic source and destination components, you will need to replace any statically-defined metadata columns with the THUNK_COLUMN, in order to avoid validation errors:

- Open the "Input and Output Properties" tab of the Advanced Editor. Delete all statically-defined columns and replace them with the THUNK_COLUMN.

- A quicker way to achieve this is to right-click on the component and choose the "Remove static columns" context menu item, if available (introduced in SSIS+ 2.0).

- NOTE: For some components and scenarios, it's possible to leave statically-defined metadata columns, and they would still be refreshed during runtime. However we recommend using the THUNK_COLUMN to have extra clarity that the columns are dynamic.

- For some built-in SSIS source and destination components, the column mappings must be removed before you can remove the columns. This is a requirement when using the OLE DB Source component. There may be other components that behave in this way, also.

- For some sources/destinations (e.g. Flat Files), the static columns must be removed and the THUNK_COLUMN must be set up in the Connection Manager. At this time, the Flat File connection manager is the only known connection manager where this applies.

- After removing the source/destination columns, always edit the components using the Advanced Editor. Using the regular editor can cause all the columns to be refreshed and they will need to be removed again.

- In the Data Flow Task Plus Properties: set “Delay Validation” to “True”.

- To open the Properties, right-mouse click on Data Flow Task Plus and choose “Properties” from the list.

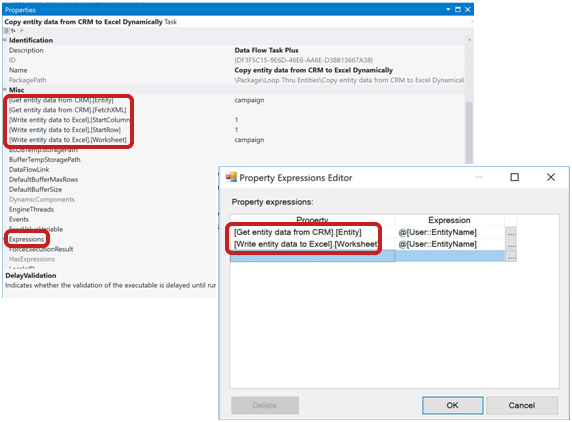

- Finally, enable the dynamic capability for the source and destination components in Data Flow Task Plus and, if necessary, customize any other properties related to their dynamic behavior.

- Get to this screen by opening the editor for Data Flow Task Plus and clicking on the “Dynamic” tab.

Other Configuration Considerations

- To maximize control from outside the package, use variables and expressions for names of elements of the package such as tables, files, entities, worksheets and resources.

- Use a Foreach Loop Container to process any number of files, tables, entities, etc.

- Use a mapping table to specify how to match up columns/fields (if the names don’t match).

If this is the first time you’ve worked with Data Flow Task Plus, we suggest that you start with a simple package and gradually add each element to it in a step by step fashion. Here’s an example of the iterations you might go through to create a package that needs a mapping table and is backing up many files into a database:

- Configure a package that backs up a single file.

- Add a variable for the filename and an expression to pass the filename to the package from outside the package.

- Add a Foreach Loop Container to iterate through multiple files, backing up each one.

- Add the mapping table lookup for columns that need to be explicitly mapped (ie. their source and destination names don’t match).